This project focused on quality assurance during PACCAR’s migration from the Ixiasoft CMS to the Empolis CLS. I conducted detailed error analysis on proof-of-concept bookmaps. That work involved recording and categorizing migration errors and suggesting fixes for them.

Goals

The goals of the project included:

- ensuring migrated content was accurate, functional, and error-free

- categorizing and understanding error types introduced during migration

- supporting authors and managers with documentation of issues and fixes

Objectives

The primary objectives of this project were to:

- analyze post-migration files for errors

- document unique error types and their causes

- suggest fixes for recurring issues

- support long-term migration planning with actionable data

Outcome

The project achieved the following results:

Comprehensive Error Tracking

Recorded 466 errors and identified 73 unique error types.

Root Cause Analysis

Researched underlying causes of recurring migration problems.

Actionable Fixes

Suggested clear solutions for metadata, linking, and reuse issues.

System Knowledge

Learned to navigate two complex systems in parallel.

Approach

Content migration is inherently high-risk. Broken links, lost metadata or structural errors can undermine usability, accuracy and compliance. Careful analysis was required to ensure that authors and end users could trust the migrated content. I followed a structured, three-phase process:

Locate Errors

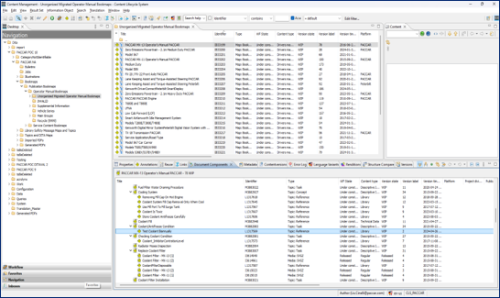

Using the RMI Migration Priority List provided by the Content Strategy Manager, I located 33 key bookmaps in the CLS.

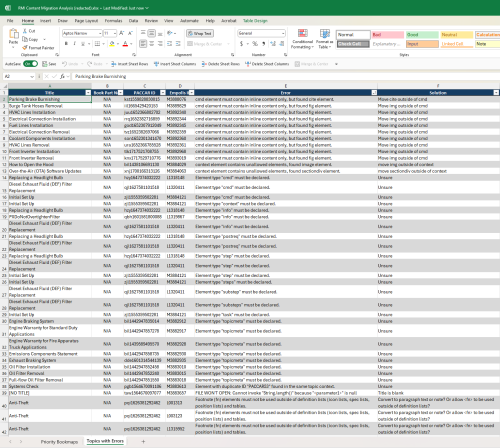

Each bookmap contained an average of 330 topics. I opened every topic, systematically scanned it for errors and recorded each one in an Excel spreadsheet.

During dedicated work periods, I completed 2–4 bookmaps per day. When I was balancing meetings or other tasks, that slowed to 1–2 per day. Across all bookmaps, I recorded 466 total errors spanning 73 unique error codes.

Understand Errors

When an error’s cause wasn’t immediately clear, I compared the migrated topic in the CLS with its original in the Ixiasoft CMS. This showed what had changed during migration.

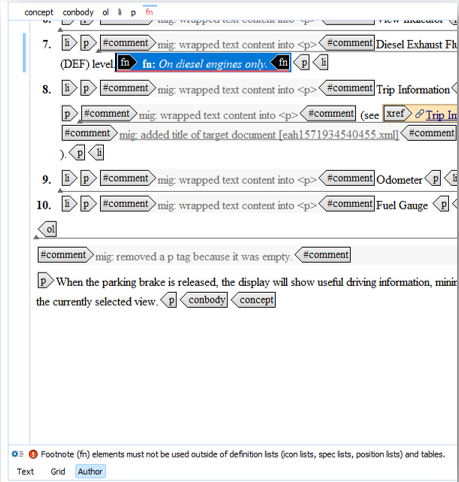

For each unique error type, I wrote an explanation of its likely cause. Examples included broken cross-references, improper nesting of elements, missing images and metadata mismatches.

I also tracked patterns, noting when the same issue recurred across multiple topics or bookmaps.

Propose Fixes

For each error type, I suggested specific corrective actions.

Examples included:

- restructuring invalid elements, such as removing tables from within elements that do not allow them
- flattening lists nested beyond the allowed maximum depth
- reassigning metadata to meet CLS standards

These recommendations were logged alongside the errors in the Excel spreadsheet, creating a resource that authors and managers could use to guide cleanup.

This project shows my ability to execute a systematic QA process on large-scale documentation, moving from detection to root-cause analysis to actionable recommendations. It also highlights attention to detail, structured authoring knowledge, and the ability to compare legacy and modern systems in parallel.

Final Deliverable

The final deliverable is not a standalone file or document. It is a set of logged errors, categorized codes and recommended fixes captured directly within PACCAR’s internal systems. All project value resides in three places:

- the CLS error log
- the Excel analysis sheet used by the migration team
- the structured recommendations that inform ongoing cleanup

While there is no external file to display, this work provided actionable insights that directly supported the department’s transition from the CMS to the CLS.